How To Create A Table Using Sql In Access

This document describes how to create and use standard (built-in) tables in BigQuery. For information about creating other table types, see:

- Creating partitioned tables

- Creating and using clustered tables

After creating a table, you can:

- Control access to your table data

- Get information about your tables

- List the tables in a dataset

- Get table metadata

For more information about managing tables including updating table properties, copying a table, and deleting a table, see Managing tables.

Before you begin

Before creating a table in BigQuery, first:

- Set up a project by following a BigQuery getting started guide.

- Create a BigQuery dataset.

- Optionally, read Introduction to tables to understand table limitations, quotas, and pricing.

Table naming

When you create a table in BigQuery, the table name must be unique per dataset. The table name can:

- Contain up to 1,024 characters.

- Contain Unicode characters in category L (letter), M (mark), N (number), Pc (connector, including underscore), Pd (dash), Zs (space). For more information, see General Category.

For example, the following are all valid table names: table 01, ग्राहक, 00_お客様, étudiant-01.

Some table names and table name prefixes are reserved. If you receive an error saying that your table name or prefix is reserved, then select a different name and try again.

Creating a table

You can create a table in BigQuery in the following ways:

- Manually using the Cloud Console or the

bqcommand-line toolbq mkcommand. - Programmatically by calling the

tables.insertAPI method. - By using the client libraries.

- From query results.

- By defining a table that references an external data source.

- When you load data.

- By using a

CREATE TABLEdata definition language (DDL) statement.

Required permissions

To create a table, you need the following IAM permissions:

-

bigquery.tables.create -

bigquery.tables.updateData -

bigquery.jobs.create

Additionally, you might require the bigquery.tables.getData permission to access the data that you write to the table.

Each of the following predefined IAM roles includes the permissions that you need in order to create a table:

-

roles/bigquery.dataEditor -

roles/bigquery.dataOwner -

roles/bigquery.admin(includes thebigquery.jobs.createpermission) -

roles/bigquery.user(includes thebigquery.jobs.createpermission) -

roles/bigquery.jobUser(includes thebigquery.jobs.createpermission)

Additionally, if you have the bigquery.datasets.create permission, you can create and update tables in the datasets that you create.

For more information on IAM roles and permissions in BigQuery, see Predefined roles and permissions.

Creating an empty table with a schema definition

You can create an empty table with a schema definition in the following ways:

- Enter the schema using the Cloud Console.

- Provide the schema inline using the

bqcommand-line tool. - Submit a JSON schema file using the

bqcommand-line tool. - Provide the schema in a table resource when calling the API's

tables.insertmethod.

For more information about specifying a table schema, see Specifying a schema.

After the table is created, you can load data into it or populate it by writing query results to it.

To create an empty table with a schema definition:

Console

-

In the Cloud Console, open the BigQuery page.

Go to BigQuery

-

In the Explorer panel, expand your project and select a dataset.

-

Expand the Actions option and click Open.

-

In the details panel, click Create table .

-

On the Create table page, in the Source section, select Empty table.

-

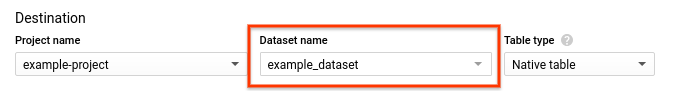

On the Create table page, in the Destination section:

-

For Dataset name, choose the appropriate dataset.

-

In the Table name field, enter the name of the table you're creating in BigQuery.

-

Verify that Table type is set to Native table.

-

-

In the Schema section, enter the schema definition.

-

Enter schema information manually by:

-

Enabling Edit as text and entering the table schema as a JSON array.

-

Using Add field to manually input the schema.

-

-

-

For Partition and cluster settings leave the default value —

No partitioning. -

In the Advanced options section, for Encryption leave the default value:

Google-managed key. By default, BigQuery encrypts customer content stored at rest. -

Click Create table.

SQL

Data definition language (DDL) statements allow you to create and modify tables and views using standard SQL query syntax.

See more on Using data definition language statements.

To create a table in the Cloud Console by using a DDL statement:

-

In the Cloud Console, open the BigQuery page.

Go to BigQuery

-

Click Compose new query.

-

Type your

CREATE TABLEDDL statement into the Query editor text area.The following query creates a table named

newtablethat expires on January 1, 2023. The table description is "a table that expires in 2023", and the table's label isorg_unit:development.CREATE TABLE mydataset.newtable ( x INT64 OPTIONS(description="An optional INTEGER field"), y STRUCT< a ARRAY<STRING> OPTIONS(description="A repeated STRING field"), b BOOL > ) OPTIONS( expiration_timestamp=TIMESTAMP "2023-01-01 00:00:00 UTC", description="a table that expires in 2023", labels=[("org_unit", "development")] ) -

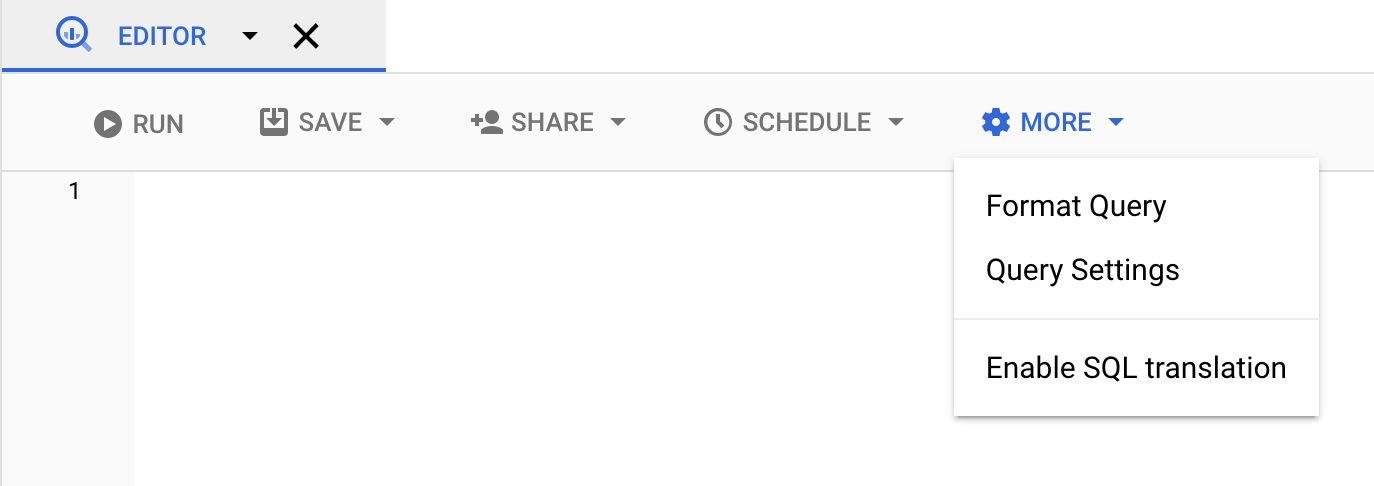

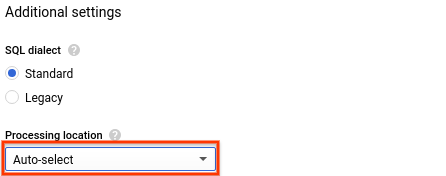

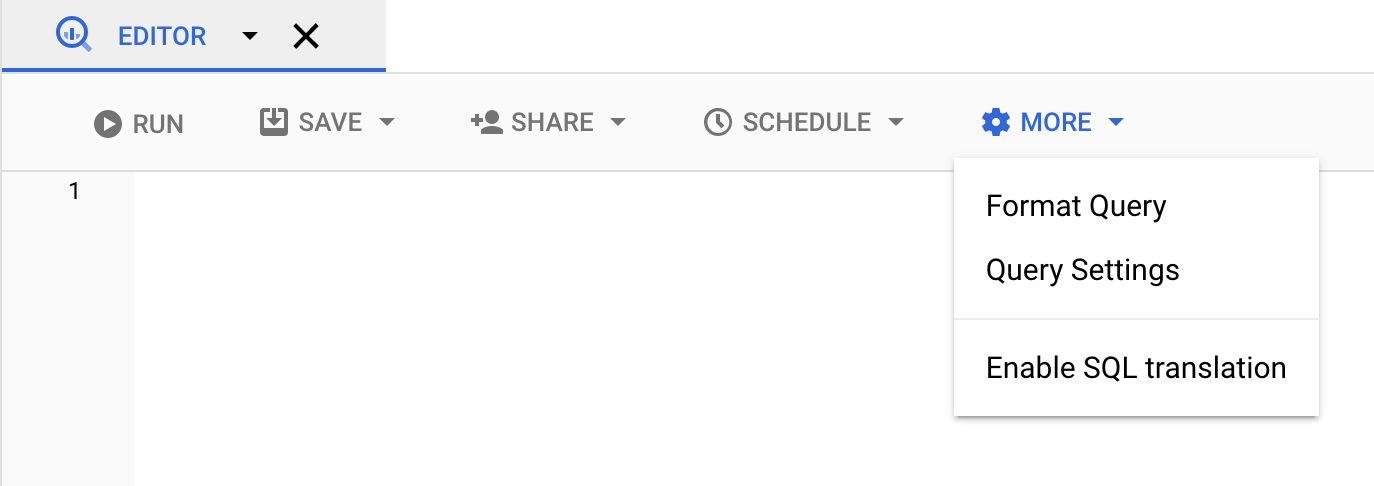

(Optional) Click More and select Query settings.

-

(Optional) For Processing location, click Auto-select and choose your data's location. If you leave processing location set to unspecified, the processing location is automatically detected.

-

Click Run. When the query completes, the table will appear in the Resources pane.

bq

Use the bq mk command with the --table or -t flag. You can supply table schema information inline or via a JSON schema file. Optional parameters include:

-

--expiration -

--description -

--time_partitioning_type -

--destination_kms_key -

--label.

--time_partitioning_type and --destination_kms_key are not demonstrated here. For more information about --time_partitioning_type, see partitioned tables. For more information about --destination_kms_key, see customer-managed encryption keys.

If you are creating a table in a project other than your default project, add the project ID to the dataset in the following format: project_id:dataset.

To create an empty table in an existing dataset with a schema definition, enter the following:

bq mk \ --table \ --expiration integer \ --description description \ --label key_1:value_1 \ --label key_2:value_2 \ project_id:dataset.table \ schema

Replace the following:

- integer is the default lifetime (in seconds) for the table. The minimum value is 3600 seconds (one hour). The expiration time evaluates to the current UTC time plus the integer value. If you set the expiration time when you create a table, the dataset's default table expiration setting is ignored.

- description is a description of the table in quotes.

- key_1:value_1 and key_2:value_2 are key-value pairs that specify labels.

- project_id is your project ID.

- dataset is a dataset in your project.

- table is the name of the table you're creating.

- schema is an inline schema definition in the format field:data_type,field:data_type or the path to the JSON schema file on your local machine.

When you specify the schema on the command line, you cannot include a RECORD (STRUCT) type, you cannot include a column description, and you cannot specify the column's mode. All modes default to NULLABLE. To include descriptions, modes, and RECORD types, supply a JSON schema file instead.

Examples:

Enter the following command to create a table using an inline schema definition. This command creates a table named mytable in mydataset in your default project. The table expiration is set to 3600 seconds (1 hour), the description is set to This is my table, and the label is set to organization:development. The command uses the -t shortcut instead of --table. The schema is specified inline as: qtr:STRING,sales:FLOAT,year:STRING.

bq mk \ -t \ --expiration 3600 \ --description "This is my table" \ --label organization:development \ mydataset.mytable \ qtr:STRING,sales:FLOAT,year:STRING

Enter the following command to create a table using a JSON schema file. This command creates a table named mytable in mydataset in your default project. The table expiration is set to 3600 seconds (1 hour), the description is set to This is my table, and the label is set to organization:development. The path to the schema file is /tmp/myschema.json.

bq mk \ --table \ --expiration 3600 \ --description "This is my table" \ --label organization:development \ mydataset.mytable \ /tmp/myschema.json

Enter the following command to create a table using an JSON schema file. This command creates a table named mytable in mydataset in myotherproject. The table expiration is set to 3600 seconds (1 hour), the description is set to This is my table, and the label is set to organization:development. The path to the schema file is /tmp/myschema.json.

bq mk \ --table \ --expiration 3600 \ --description "This is my table" \ --label organization:development \ myotherproject:mydataset.mytable \ /tmp/myschema.json

After the table is created, you can update the table's expiration, description, and labels. You can also modify the schema definition.

API

Call the tables.insert method with a defined table resource.

C#

Before trying this sample, follow the C# setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery C# API reference documentation.

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

PHP

Before trying this sample, follow the PHP setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery PHP API reference documentation.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

Ruby

Before trying this sample, follow the Ruby setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Ruby API reference documentation.

Creating an empty table without a schema definition

Creating a table from a query result

To create a table from a query result, write the results to a destination table.

Console

-

Open the BigQuery page in the Cloud Console.

Go to the BigQuery page

-

In the Explorer panel, expand your project and select a dataset.

-

If the query editor is hidden, click Show editor at the top right of the window.

-

Enter a valid SQL query in the Query editor text area.

-

Click More and then select Query options.

-

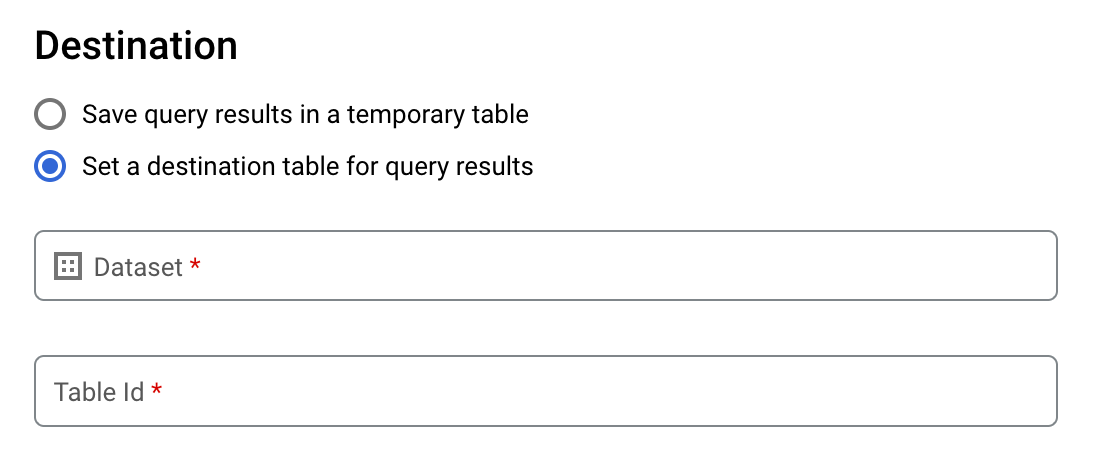

Check the box to Set a destination table for query results.

-

In the Destination section, select the appropriate Project name and Dataset name where the table will be created, and choose a Table name.

-

In the Destination table write preference section, choose one of the following:

- Write if empty — Writes the query results to the table only if the table is empty.

- Append to table — Appends the query results to an existing table.

- Overwrite table — Overwrites an existing table with the same name using the query results.

-

(Optional) For Processing location, click Auto-select and choose your location.

-

Click Run query. This creates a query job that writes the query results to the table you specified.

Alternatively, if you forget to specify a destination table before running your query, you can copy the cached results table to a permanent table by clicking the Save Results button below the editor.

SQL

Data definition language (DDL) statements allow you to create and modify tables using standard SQL query syntax.

For more information, see the CREATE TABLE statement page and the CREATE TABLE example: Creating a new table from an existing table.

bq

Enter the bq query command and specify the --destination_table flag to create a permanent table based on the query results. Specify the use_legacy_sql=false flag to use standard SQL syntax. To write the query results to a table that is not in your default project, add the project ID to the dataset name in the following format: project_id:dataset .

(Optional) Supply the --location flag and set the value to your location.

To control the write disposition for an existing destination table, specify one of the following optional flags:

-

--append_table: If the destination table exists, the query results are appended to it. -

--replace: If the destination table exists, it is overwritten with the query results.

bq --location=location query \ --destination_table project_id:dataset.table \ --use_legacy_sql=false 'query'

Replace the following:

-

locationis the name of the location used to process the query. The--locationflag is optional. For example, if you are using BigQuery in the Tokyo region, you can set the flag's value toasia-northeast1. You can set a default value for the location by using the.bigqueryrcfile. -

project_idis your project ID. -

datasetis the name of the dataset that contains the table to which you are writing the query results. -

tableis the name of the table to which you're writing the query results. -

queryis a query in standard SQL syntax.

If no write disposition flag is specified, the default behavior is to write the results to the table only if it is empty. If the table exists and it is not empty, the following error is returned: `BigQuery error in query operation: Error processing job project_id:bqjob_123abc456789_00000e1234f_1': Already Exists: Table project_id:dataset.table .

Examples:

Enter the following command to write query results to a destination table named mytable in mydataset. The dataset is in your default project. Since no write disposition flag is specified in the command, the table must be new or empty. Otherwise, an Already exists error is returned. The query retrieves data from the USA Name Data public dataset.

bq query \ --destination_table mydataset.mytable \ --use_legacy_sql=false \ 'SELECT name, number FROM `bigquery-public-data`.usa_names.usa_1910_current WHERE gender = "M" ORDER BY number DESC'

Enter the following command to use query results to overwrite a destination table named mytable in mydataset. The dataset is in your default project. The command uses the --replace flag to overwrite the destination table.

bq query \ --destination_table mydataset.mytable \ --replace \ --use_legacy_sql=false \ 'SELECT name, number FROM `bigquery-public-data`.usa_names.usa_1910_current WHERE gender = "M" ORDER BY number DESC'

Enter the following command to append query results to a destination table named mytable in mydataset. The dataset is in my-other-project, not your default project. The command uses the --append_table flag to append the query results to the destination table.

bq query \ --append_table \ --use_legacy_sql=false \ --destination_table my-other-project:mydataset.mytable \ 'SELECT name, number FROM `bigquery-public-data`.usa_names.usa_1910_current WHERE gender = "M" ORDER BY number DESC'

The output for each of these examples looks like the following. For readability, some output is truncated.

Waiting on bqjob_r123abc456_000001234567_1 ... (2s) Current status: DONE +---------+--------+ | name | number | +---------+--------+ | Robert | 10021 | | John | 9636 | | Robert | 9297 | | ... | +---------+--------+

API

To save query results to a permanent table, call the jobs.insert method, configure a query job, and include a value for the destinationTable property. To control the write disposition for an existing destination table, configure the writeDisposition property.

To control the processing location for the query job, specify the location property in the jobReference section of the job resource.

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To save query results to a permanent table, set the destination table to the desired TableId in a QueryJobConfiguration.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

To save query results to a permanent table, create a QueryJobConfig and set the destination to the desired TableReference. Pass the job configuration to the query method.Creating a table that references an external data source

An external data source is a data source that you can query directly from BigQuery, even though the data is not stored in BigQuery storage.

BigQuery supports the following external data sources:

- Bigtable

- Cloud Spanner

- Cloud SQL

- Cloud Storage

- Drive

For more information, see Introduction to external data sources.

Creating a table when you load data

When you load data into BigQuery, you can load data into a new table or partition, you can append data to an existing table or partition, or you can overwrite a table or partition. You do not need to create an empty table before loading data into it. You can create the new table and load your data at the same time.

When you load data into BigQuery, you can supply the table or partition schema, or for supported data formats, you can use schema auto-detection.

For more information about loading data, see Introduction to loading data into BigQuery.

Controlling access to tables

To configure access to tables and views, you can grant an IAM role to an entity at the following levels, listed in order of range of resources allowed (largest to smallest):

- a high level in the Google Cloud resource hierarchy such as the project, folder, or organization level

- the dataset level

- the table/view level

You can also restrict access to data within tables, by using different methods:

- column-level security

- row-level security

Access with any resource protected by IAM is additive. For example, if an entity does not have access at the high level such as a project, you could grant the entity access at the dataset level, and then the entity will have access to the tables and views in the dataset. Similarly, if the entity does not have access at the high level or the dataset level, you could grant the entity access at the table or view level.

Granting IAM roles at a higher level in the Google Cloud resource hierarchy such as the project, folder, or organization level gives the entity access to a broad set of resources. For example, granting a role to an entity at the project level gives that entity permissions that apply to all datasets throughout the project.

Granting a role at the dataset level specifies the operations an entity is allowed to perform on tables and views in that specific dataset, even if the entity does not have access at a higher level. For information on configuring dataset-level access controls, see Controlling access to datasets.

Granting a role at the table or view level specifies the operations an entity is allowed to perform on specific tables and views, even if the entity does not have access at a higher level. For information on configuring table-level access controls, see Controlling access to tables and views.

You can also create IAM custom roles. If you create a custom role, the permissions you grant depend on the specific operations you want the entity to be able to perform.

You can't set a "deny" permission on any resource protected by IAM.

For more information about roles and permissions, see:

- Understanding roles in the IAM documentation

- BigQuery Predefined roles and permissions

For more information about control access to resources and data, see the following:

- Controlling access to datasets

- Controlling access to tables and views

- Restricting access with column-level security

- Introduction to row-level security

Using tables

Getting information about tables

You can get information or metadata about tables in the following ways:

- Using the Cloud Console.

- Using the

bqcommand-line toolbq showcommand. - Calling the

tables.getAPI method. - Using the client libraries.

- Querying the

INFORMATION_SCHEMAviews (beta).

Required permissions

At a minimum, to get information about tables, you must be granted bigquery.tables.get permissions. The following predefined IAM roles include bigquery.tables.get permissions:

-

bigquery.metadataViewer -

bigquery.dataViewer -

bigquery.dataOwner -

bigquery.dataEditor -

bigquery.admin

In addition, if a user has bigquery.datasets.create permissions, when that user creates a dataset, they are granted bigquery.dataOwner access to it. bigquery.dataOwner access gives the user the ability to retrieve table metadata.

For more information on IAM roles and permissions in BigQuery, see Access control.

Getting table information

To get information about tables:

Console

-

In the navigation panel, in the Resources section, expand your project and select a dataset. Click the dataset name to expand it. This displays the tables and views in the dataset.

-

Click the table name.

-

Below the editor, click Details. This page displays the table's description and table information.

-

Click the Schema tab to view the table's schema definition.

bq

Issue the bq show command to display all table information. Use the --schema flag to display only table schema information. The --format flag can be used to control the output.

If you are getting information about a table in a project other than your default project, add the project ID to the dataset in the following format: project_id:dataset.

bq show \ --schema \ --format=prettyjson \ project_id:dataset.table

Where:

- project_id is your project ID.

- dataset is the name of the dataset.

- table is the name of the table.

Examples:

Enter the following command to display all information about mytable in mydataset. mydataset is in your default project.

bq show --format=prettyjson mydataset.mytable

Enter the following command to display all information about mytable in mydataset. mydataset is in myotherproject, not your default project.

bq show --format=prettyjson myotherproject:mydataset.mytable

Enter the following command to display only schema information about mytable in mydataset. mydataset is in myotherproject, not your default project.

bq show --schema --format=prettyjson myotherproject:mydataset.mytable

API

Call the tables.get method and provide any relevant parameters.

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

PHP

Before trying this sample, follow the PHP setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery PHP API reference documentation.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

Getting table information using INFORMATION_SCHEMA (beta)

INFORMATION_SCHEMA is a series of views that provide access to metadata about datasets, routines, tables, views, jobs, reservations, and streaming data.

You can query the INFORMATION_SCHEMA.TABLES and INFORMATION_SCHEMA.TABLE_OPTIONS views to retrieve metadata about tables and views in a project. You can also query the INFORMATION_SCHEMA.COLUMNS and INFORMATION_SCHEMA.COLUMN_FIELD_PATHS views to retrieve metadata about the columns (fields) in a table.

The TABLES and TABLE_OPTIONS views also contain high-level information about views. For detailed information, query the INFORMATION_SCHEMA.VIEWS view instead.

TABLES view

When you query the INFORMATION_SCHEMA.TABLES view, the query results contain one row for each table or view in a dataset.

The INFORMATION_SCHEMA.TABLES view has the following schema:

| Column name | Data type | Value |

|---|---|---|

TABLE_CATALOG | STRING | The project ID of the project that contains the dataset |

TABLE_SCHEMA | STRING | The name of the dataset that contains the table or view also referred to as the datasetId |

TABLE_NAME | STRING | The name of the table or view also referred to as the tableId |

TABLE_TYPE | STRING | The table type:

|

IS_INSERTABLE_INTO | STRING | YES or NO depending on whether the table supports DML INSERT statements |

IS_TYPED | STRING | The value is always NO |

CREATION_TIME | TIMESTAMP | The table's creation time |

DDL | STRING | The DDL statement that can be used to recreate the table, such as CREATE TABLE or CREATE VIEW |

Examples

Example 1:

The following example retrieves table metadata for all of the tables in the dataset named mydataset. The query selects all of the columns from the INFORMATION_SCHEMA.TABLES view except for is_typed, which is reserved for future use, and ddl, which is hidden from SELECT * queries. The metadata returned is for all tables in mydataset in your default project.

mydataset contains the following tables:

-

mytable1: a standard BigQuery table -

myview1: a BigQuery view

To run the query against a project other than your default project, add the project ID to the dataset in the following format: `project_id`.dataset.INFORMATION_SCHEMA.view ; for example, `myproject`.mydataset.INFORMATION_SCHEMA.TABLES.

To run the query:

Console

-

Open the BigQuery page in the Cloud Console.

Go to the BigQuery page

-

Enter the following standard SQL query in the Query editor box.

INFORMATION_SCHEMArequires standard SQL syntax. Standard SQL is the default syntax in the Cloud Console.SELECT * EXCEPT(is_typed) FROM mydataset.INFORMATION_SCHEMA.TABLES

-

Click Run.

bq

Use the bq query command and specify standard SQL syntax by using the --nouse_legacy_sql or --use_legacy_sql=false flag. Standard SQL syntax is required for INFORMATION_SCHEMA queries.

To run the query, enter:

bq query --nouse_legacy_sql \ 'SELECT * EXCEPT(is_typed) FROM mydataset.INFORMATION_SCHEMA.TABLES'

The results should look like the following:

+----------------+---------------+----------------+------------+--------------------+---------------------+---------------------------------------------+ | table_catalog | table_schema | table_name | table_type | is_insertable_into | creation_time | ddl | +----------------+---------------+----------------+------------+--------------------+---------------------+---------------------------------------------+ | myproject | mydataset | mytable1 | BASE TABLE | YES | 2018-10-29 20:34:44 | CREATE TABLE `myproject.mydataset.mytable1` | | | | | | | | ( | | | | | | | | id INT64 | | | | | | | | ); | | myproject | mydataset | myview1 | VIEW | NO | 2018-12-29 00:19:20 | CREATE VIEW `myproject.mydataset.myview1` | | | | | | | | AS SELECT 100 as id; | +----------------+---------------+----------------+------------+--------------------+---------------------+---------------------------------------------+

Example 2:

The following example retrieves all tables of type BASE TABLE from the INFORMATION_SCHEMA.TABLES view. The is_typed column is excluded, and ddl column is hidden. The metadata returned is for tables in mydataset in your default project.

To run the query against a project other than your default project, add the project ID to the dataset in the following format: `project_id`.dataset.INFORMATION_SCHEMA.view ; for example, `myproject`.mydataset.INFORMATION_SCHEMA.TABLES.

To run the query:

Console

-

Open the BigQuery page in the Cloud Console.

Go to the BigQuery page

-

Enter the following standard SQL query in the Query editor box.

INFORMATION_SCHEMArequires standard SQL syntax. Standard SQL is the default syntax in the Cloud Console.SELECT * EXCEPT(is_typed) FROM mydataset.INFORMATION_SCHEMA.TABLES WHERE table_type="BASE TABLE"

-

Click Run.

bq

Use the bq query command and specify standard SQL syntax by using the --nouse_legacy_sql or --use_legacy_sql=false flag. Standard SQL syntax is required for INFORMATION_SCHEMA queries.

To run the query, enter:

bq query --nouse_legacy_sql \ 'SELECT * EXCEPT(is_typed) FROM mydataset.INFORMATION_SCHEMA.TABLES WHERE table_type="BASE TABLE"'

The results should look like the following:

+----------------+---------------+----------------+------------+--------------------+---------------------+---------------------------------------------+ | table_catalog | table_schema | table_name | table_type | is_insertable_into | creation_time | ddl | +----------------+---------------+----------------+------------+--------------------+---------------------+---------------------------------------------+ | myproject | mydataset | mytable1 | BASE TABLE | YES | 2018-10-31 22:40:05 | CREATE TABLE myproject.mydataset.mytable1 | | | | | | | | ( | | | | | | | | id INT64 | | | | | | | | ); | +----------------+---------------+----------------+------------+--------------------+---------------------+---------------------------------------------+ Example 3:

The following example retrieves table_name and ddl columns from the INFORMATION_SCHEMA.TABLES view for the population_by_zip_2010 table in the census_bureau_usa dataset. This dataset is part of the BigQuery public dataset program.

Because the table you're querying is in another project, you add the project ID to the dataset in the following format: `project_id`.dataset.INFORMATION_SCHEMA.view . In this example, the value is `bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.TABLES.

To run the query:

Console

-

Open the BigQuery page in the Cloud Console.

Go to the BigQuery page

-

Enter the following standard SQL query in the Query editor box.

INFORMATION_SCHEMArequires standard SQL syntax. Standard SQL is the default syntax in the Cloud Console.SELECT table_name, ddl FROM `bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.TABLES WHERE table_name="population_by_zip_2010"

-

Click Run.

bq

Use the bq query command and specify standard SQL syntax by using the --nouse_legacy_sql or --use_legacy_sql=false flag. Standard SQL syntax is required for INFORMATION_SCHEMA queries.

To run the query, enter:

bq query --nouse_legacy_sql \ 'SELECT table_name, ddl FROM `bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.TABLES WHERE table_name="population_by_zip_2010"'

The results should look like the following:

+------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+ | table_name | ddl | +------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+ | population_by_zip_2010 | CREATE TABLE `bigquery-public-data.census_bureau_usa.population_by_zip_2010` | | | ( | | | geo_id STRING OPTIONS(description="Geo code"), | | | zipcode STRING NOT NULL OPTIONS(description="Five digit ZIP Code Tabulation Area Census Code"), | | | population INT64 OPTIONS(description="The total count of the population for this segment."), | | | minimum_age INT64 OPTIONS(description="The minimum age in the age range. If null, this indicates the row as a total for male, female, or overall population."), | | | maximum_age INT64 OPTIONS(description="The maximum age in the age range. If null, this indicates the row as having no maximum (such as 85 and over) or the row is a total of the male, female, or overall population."), | | | gender STRING OPTIONS(description="male or female. If empty, the row is a total population summary.") | | | ) | | | OPTIONS( | | | labels=[("freebqcovid", "")] | | | ); | +------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+ TABLE_OPTIONS view

When you query the INFORMATION_SCHEMA.TABLE_OPTIONS view, the query results contain one row for each table or view in a dataset.

The INFORMATION_SCHEMA.TABLE_OPTIONS view has the following schema:

| Column name | Data type | Value |

|---|---|---|

TABLE_CATALOG | STRING | The project ID of the project that contains the dataset |

TABLE_SCHEMA | STRING | The name of the dataset that contains the table or view also referred to as the datasetId |

TABLE_NAME | STRING | The name of the table or view also referred to as the tableId |

OPTION_NAME | STRING | One of the name values in the options table |

OPTION_TYPE | STRING | One of the data type values in the options table |

OPTION_VALUE | STRING | One of the value options in the options table |

Options table

OPTION_NAME | OPTION_TYPE | OPTION_VALUE |

|---|---|---|

partition_expiration_days | FLOAT64 | The default lifetime, in days, of all partitions in a partitioned table |

expiration_timestamp | FLOAT64 | The time when this table expires |

kms_key_name | STRING | The name of the Cloud KMS key used to encrypt the table |

friendly_name | STRING | The table's descriptive name |

description | STRING | A description of the table |

labels | ARRAY<STRUCT<STRING, STRING>> | An array of STRUCT's that represent the labels on the table |

require_partition_filter | BOOL | Whether queries over the table require a partition filter |

enable_refresh | BOOL | Whether automatic refresh is enabled for a materialized view |

refresh_interval_minutes | FLOAT64 | How frequently a materialized view is refreshed |

For external tables, the following options are also possible:

| Options | |

|---|---|

allow_jagged_rows | If Applies to CSV data. |

allow_quoted_newlines | If Applies to CSV data. |

compression | The compression type of the data source. Supported values include: Applies to CSV and JSON data. |

enable_logical_types | If Applies to Avro data. |

encoding | The character encoding of the data. Supported values include: Applies to CSV data. |

field_delimiter | The separator for fields in a CSV file. Applies to CSV data. |

format | The format of the external data. Supported values include: The value |

decimal_target_types | Determines how to convert a Example: |

json_extension | For JSON data, indicates a particular JSON interchange format. If not specified, BigQuery reads the data as generic JSON records. Supported values include: |

hive_partition_uri_prefix | A common prefix for all source URIs before the partition key encoding begins. Applies only to hive-partitioned external tables. Applies to Avro, CSV, JSON, Parquet, and ORC data. Example: |

ignore_unknown_values | If Applies to CSV and JSON data. |

max_bad_records | The maximum number of bad records to ignore when reading the data. Applies to: CSV, JSON, and Sheets data. |

null_marker | The string that represents Applies to CSV data. |

projection_fields | A list of entity properties to load. Applies to Datastore data. |

quote | The string used to quote data sections in a CSV file. If your data contains quoted newline characters, also set the Applies to CSV data. |

require_hive_partition_filter | If Applies to Avro, CSV, JSON, Parquet, and ORC data. |

sheet_range | Range of a Sheets spreadsheet to query from. Applies to Sheets data. Example: |

skip_leading_rows | The number of rows at the top of a file to skip when reading the data. Applies to CSV and Sheets data. |

uris | An array of fully qualified URIs for the external data locations. Example: |

Examples

Example 1:

The following example retrieves the default table expiration times for all tables in mydataset in your default project (myproject) by querying the INFORMATION_SCHEMA.TABLE_OPTIONS view.

To run the query against a project other than your default project, add the project ID to the dataset in the following format: `project_id`.dataset.INFORMATION_SCHEMA.view ; for example, `myproject`.mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS.

To run the query:

Console

-

Open the BigQuery page in the Cloud Console.

Go to the BigQuery page

-

Enter the following standard SQL query in the Query editor box.

INFORMATION_SCHEMArequires standard SQL syntax. Standard SQL is the default syntax in the Cloud Console.SELECT * FROM mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS WHERE option_name="expiration_timestamp"

-

Click Run.

bq

Use the bq query command and specify standard SQL syntax by using the --nouse_legacy_sql or --use_legacy_sql=false flag. Standard SQL syntax is required for INFORMATION_SCHEMA queries.

To run the query, enter:

bq query --nouse_legacy_sql \ 'SELECT * FROM mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS WHERE option_name="expiration_timestamp"'

The results should look like the following:

+----------------+---------------+------------+----------------------+-------------+--------------------------------------+ | table_catalog | table_schema | table_name | option_name | option_type | option_value | +----------------+---------------+------------+----------------------+-------------+--------------------------------------+ | myproject | mydataset | mytable1 | expiration_timestamp | TIMESTAMP | TIMESTAMP "2020-01-16T21:12:28.000Z" | | myproject | mydataset | mytable2 | expiration_timestamp | TIMESTAMP | TIMESTAMP "2021-01-01T21:12:28.000Z" | +----------------+---------------+------------+----------------------+-------------+--------------------------------------+

Example 2:

The following example retrieves metadata about all tables in mydataset that contain test data. The query uses the values in the description option to find tables that contain "test" anywhere in the description. mydataset is in your default project — myproject.

To run the query against a project other than your default project, add the project ID to the dataset in the following format: `project_id`.dataset.INFORMATION_SCHEMA.view ; for example, `myproject`.mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS.

To run the query:

Console

-

Open the BigQuery page in the Cloud Console.

Go to the BigQuery page

-

Enter the following standard SQL query in the Query editor box.

INFORMATION_SCHEMArequires standard SQL syntax. Standard SQL is the default syntax in the Cloud Console.SELECT * FROM mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS WHERE option_name="description" AND option_value LIKE "%test%"

-

Click Run.

bq

Use the bq query command and specify standard SQL syntax by using the --nouse_legacy_sql or --use_legacy_sql=false flag. Standard SQL syntax is required for INFORMATION_SCHEMA queries.

To run the query, enter:

bq query --nouse_legacy_sql \ 'SELECT * FROM mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS WHERE option_name="description" AND option_value LIKE "%test%"'

The results should look like the following:

+----------------+---------------+------------+-------------+-------------+--------------+ | table_catalog | table_schema | table_name | option_name | option_type | option_value | +----------------+---------------+------------+-------------+-------------+--------------+ | myproject | mydataset | mytable1 | description | STRING | "test data" | | myproject | mydataset | mytable2 | description | STRING | "test data" | +----------------+---------------+------------+-------------+-------------+--------------+

COLUMNS view

When you query the INFORMATION_SCHEMA.COLUMNS view, the query results contain one row for each column (field) in a table.

The INFORMATION_SCHEMA.COLUMNS view has the following schema:

| Column name | Data type | Value |

|---|---|---|

TABLE_CATALOG | STRING | The project ID of the project that contains the dataset |

TABLE_SCHEMA | STRING | The name of the dataset that contains the table also referred to as the datasetId |

TABLE_NAME | STRING | The name of the table or view also referred to as the tableId |

COLUMN_NAME | STRING | The name of the column |

ORDINAL_POSITION | INT64 | The 1-indexed offset of the column within the table; if it's a pseudo column such as _PARTITIONTIME or _PARTITIONDATE, the value is NULL |

IS_NULLABLE | STRING | YES or NO depending on whether the column's mode allows NULL values |

DATA_TYPE | STRING | The column's standard SQL data type |

IS_GENERATED | STRING | The value is always NEVER |

GENERATION_EXPRESSION | STRING | The value is always NULL |

IS_STORED | STRING | The value is always NULL |

IS_HIDDEN | STRING | YES or NO depending on whether the column is a pseudo column such as _PARTITIONTIME or _PARTITIONDATE |

IS_UPDATABLE | STRING | The value is always NULL |

IS_SYSTEM_DEFINED | STRING | YES or NO depending on whether the column is a pseudo column such as _PARTITIONTIME or _PARTITIONDATE |

IS_PARTITIONING_COLUMN | STRING | YES or NO depending on whether the column is a partitioning column |

CLUSTERING_ORDINAL_POSITION | INT64 | The 1-indexed offset of the column within the table's clustering columns; the value is NULL if the table is not a clustered table |

Examples

The following example retrieves metadata from the INFORMATION_SCHEMA.COLUMNS view for the population_by_zip_2010 table in the census_bureau_usa dataset. This dataset is part of the BigQuery public dataset program.

Because the table you're querying is in another project, the bigquery-public-data project, you add the project ID to the dataset in the following format: `project_id`.dataset.INFORMATION_SCHEMA.view ; for example, `bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.TABLES.

The following columns are excluded from the query results because they are currently reserved for future use:

-

IS_GENERATED -

GENERATION_EXPRESSION -

IS_STORED -

IS_UPDATABLE

To run the query:

Console

-

Open the BigQuery page in the Cloud Console.

Go to the BigQuery page

-

Enter the following standard SQL query in the Query editor box.

INFORMATION_SCHEMArequires standard SQL syntax. Standard SQL is the default syntax in the Cloud Console.SELECT * EXCEPT(is_generated, generation_expression, is_stored, is_updatable) FROM `bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.COLUMNS WHERE table_name="population_by_zip_2010"

-

Click Run.

bq

Use the bq query command and specify standard SQL syntax by using the --nouse_legacy_sql or --use_legacy_sql=false flag. Standard SQL syntax is required for INFORMATION_SCHEMA queries.

To run the query, enter:

bq query --nouse_legacy_sql \ 'SELECT * EXCEPT(is_generated, generation_expression, is_stored, is_updatable) FROM `bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.COLUMNS WHERE table_name="population_by_zip_2010"'

The results should look like the following. For readability, table_catalog and table_schema are excluded from the results:

+------------------------+-------------+------------------+-------------+-----------+-----------+-------------------+------------------------+-----------------------------+ | table_name | column_name | ordinal_position | is_nullable | data_type | is_hidden | is_system_defined | is_partitioning_column | clustering_ordinal_position | +------------------------+-------------+------------------+-------------+-----------+-----------+-------------------+------------------------+-----------------------------+ | population_by_zip_2010 | zipcode | 1 | NO | STRING | NO | NO | NO | NULL | | population_by_zip_2010 | geo_id | 2 | YES | STRING | NO | NO | NO | NULL | | population_by_zip_2010 | minimum_age | 3 | YES | INT64 | NO | NO | NO | NULL | | population_by_zip_2010 | maximum_age | 4 | YES | INT64 | NO | NO | NO | NULL | | population_by_zip_2010 | gender | 5 | YES | STRING | NO | NO | NO | NULL | | population_by_zip_2010 | population | 6 | YES | INT64 | NO | NO | NO | NULL | +------------------------+-------------+------------------+-------------+-----------+-----------+-------------------+------------------------+-----------------------------+

COLUMN_FIELD_PATHS view

When you query the INFORMATION_SCHEMA.COLUMN_FIELD_PATHS view, the query results contain one row for each column nested within a RECORD (or STRUCT) column.

The INFORMATION_SCHEMA.COLUMN_FIELD_PATHS view has the following schema:

| Column name | Data type | Value |

|---|---|---|

TABLE_CATALOG | STRING | The project ID of the project that contains the dataset |

TABLE_SCHEMA | STRING | The name of the dataset that contains the table also referred to as the datasetId |

TABLE_NAME | STRING | The name of the table or view also referred to as the tableId |

COLUMN_NAME | STRING | The name of the column |

FIELD_PATH | STRING | The path to a column nested within a `RECORD` or `STRUCT` column |

DATA_TYPE | STRING | The column's standard SQL data type |

DESCRIPTION | STRING | The column's description |

Examples

The following example retrieves metadata from the INFORMATION_SCHEMA.COLUMN_FIELD_PATHS view for the commits table in the github_repos dataset. This dataset is part of the BigQuery public dataset program.

Because the table you're querying is in another project, the bigquery-public-data project, you add the project ID to the dataset in the following format: `project_id`.dataset.INFORMATION_SCHEMA.view ; for example, `bigquery-public-data`.github_repos.INFORMATION_SCHEMA.COLUMN_FIELD_PATHS.

The commits table contains the following nested and nested and repeated columns:

-

author: nestedRECORDcolumn -

committer: nestedRECORDcolumn -

trailer: nested and repeatedRECORDcolumn -

difference: nested and repeatedRECORDcolumn

Your query will retrieve metadata about the author and difference columns.

To run the query:

Console

-

Open the BigQuery page in the Cloud Console.

Go to the BigQuery page

-

Enter the following standard SQL query in the Query editor box.

INFORMATION_SCHEMArequires standard SQL syntax. Standard SQL is the default syntax in the Cloud Console.SELECT * FROM `bigquery-public-data`.github_repos.INFORMATION_SCHEMA.COLUMN_FIELD_PATHS WHERE table_name="commits" AND column_name="author" OR column_name="difference"

-

Click Run.

bq

Use the bq query command and specify standard SQL syntax by using the --nouse_legacy_sql or --use_legacy_sql=false flag. Standard SQL syntax is required for INFORMATION_SCHEMA queries.

To run the query, enter:

bq query --nouse_legacy_sql \ 'SELECT * FROM `bigquery-public-data`.github_repos.INFORMATION_SCHEMA.COLUMN_FIELD_PATHS WHERE table_name="commits" AND column_name="author" OR column_name="difference"'

The results should look like the following. For readability, table_catalog and table_schema are excluded from the results.

+------------+-------------+---------------------+-----------------------------------------------------------------------------------------------------------------------------------------------------+-------------+ | table_name | column_name | field_path | data_type | description | +------------+-------------+---------------------+-----------------------------------------------------------------------------------------------------------------------------------------------------+-------------+ | commits | author | author | STRUCT<name STRING, email STRING, time_sec INT64, tz_offset INT64, date TIMESTAMP> | NULL | | commits | author | author.name | STRING | NULL | | commits | author | author.email | STRING | NULL | | commits | author | author.time_sec | INT64 | NULL | | commits | author | author.tz_offset | INT64 | NULL | | commits | author | author.date | TIMESTAMP | NULL | | commits | difference | difference | ARRAY<STRUCT<old_mode INT64, new_mode INT64, old_path STRING, new_path STRING, old_sha1 STRING, new_sha1 STRING, old_repo STRING, new_repo STRING>> | NULL | | commits | difference | difference.old_mode | INT64 | NULL | | commits | difference | difference.new_mode | INT64 | NULL | | commits | difference | difference.old_path | STRING | NULL | | commits | difference | difference.new_path | STRING | NULL | | commits | difference | difference.old_sha1 | STRING | NULL | | commits | difference | difference.new_sha1 | STRING | NULL | | commits | difference | difference.old_repo | STRING | NULL | | commits | difference | difference.new_repo | STRING | NULL | +------------+-------------+---------------------+-----------------------------------------------------------------------------------------------------------------------------------------------------+-------------+

Listing tables in a dataset

You can list tables in datasets in the following ways:

- Using the Cloud Console.

- Using the

bqcommand-line toolbq lscommand. - Calling the

tables.listAPI method. - Using the client libraries.

Required permissions

At a minimum, to list tables in a dataset, you must be granted bigquery.tables.list permissions. The following predefined IAM roles include bigquery.tables.list permissions:

-

bigquery.user -

bigquery.metadataViewer -

bigquery.dataViewer -

bigquery.dataEditor -

bigquery.dataOwner -

bigquery.admin

For more information on IAM roles and permissions in BigQuery, see Access control.

Listing tables

To list the tables in a dataset:

Console

-

In the Cloud Console, in the navigation pane, click your dataset to expand it. This displays the tables and views in the dataset.

-

Scroll through the list to see the tables in the dataset. Tables and views are identified by different icons.

bq

Issue the bq ls command. The --format flag can be used to control the output. If you are listing tables in a project other than your default project, add the project ID to the dataset in the following format: project_id:dataset.

Additional flags include:

-

--max_resultsor-n: An integer indicating the maximum number of results. The default value is50.

bq ls \ --format=pretty \ --max_results integer \ project_id:dataset

Where:

- integer is an integer representing the number of tables to list.

- project_id is your project ID.

- dataset is the name of the dataset.

When you run the command, the Type field displays either TABLE or VIEW. For example:

+-------------------------+-------+----------------------+-------------------+ | tableId | Type | Labels | Time Partitioning | +-------------------------+-------+----------------------+-------------------+ | mytable | TABLE | department:shipping | | | myview | VIEW | | | +-------------------------+-------+----------------------+-------------------+

Examples:

Enter the following command to list tables in dataset mydataset in your default project.

bq ls --format=pretty mydataset

Enter the following command to return more than the default output of 50 tables from mydataset. mydataset is in your default project.

bq ls --format=pretty --max_results 60 mydataset

Enter the following command to list tables in dataset mydataset in myotherproject.

bq ls --format=pretty myotherproject:mydataset

API

To list tables using the API, call the tables.list method.

C#

Before trying this sample, follow the C# setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery C# API reference documentation.

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

PHP

Before trying this sample, follow the PHP setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery PHP API reference documentation.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

Ruby

Before trying this sample, follow the Ruby setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Ruby API reference documentation.

Table security

To control access to tables in BigQuery, see Introduction to table access controls.

Next steps

- For more information about datasets, see Introduction to datasets.

- For more information about handling table data, see Managing table data.

- For more information about specifying table schemas, see Specifying a schema.

- For more information about modifying table schemas, see Modifying table schemas.

- For more information about managing tables, see Managing tables.

- To see an overview of

INFORMATION_SCHEMA, go to Introduction to BigQueryINFORMATION_SCHEMA.

If you're new to Google Cloud, create an account to evaluate how BigQuery performs in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

Try BigQuery free

How To Create A Table Using Sql In Access

Source: https://cloud.google.com/bigquery/docs/tables

Posted by: osbywaye1974.blogspot.com

0 Response to "How To Create A Table Using Sql In Access"

Post a Comment